AI platforms are rapidly becoming part of the discovery journey. Buyers are asking ChatGPT, Claude, and Google AI for recommendations across categories ranging from software and agencies to consumer products. As a result, AI visibility tracking is climbing the marketing agenda. The critical question is how reliably that visibility can be measured.

New research from SparkToro, conducted in partnership with Gumshoe, offers some of the clearest evidence to date on how AI recommendation systems behave. The findings do not undermine AI tracking, but they do reshape how marketers should approach it.

AI Recommendation Lists Are Highly Variable

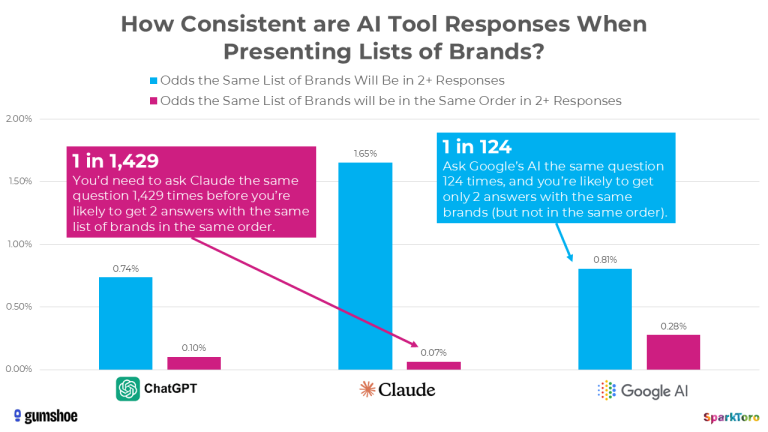

SparkToro’s study set out to test a foundational assumption: if you ask an AI for brand or product recommendations repeatedly, will you receive consistent results?

To answer that, 600 volunteers ran 12 prompts across ChatGPT, Claude, and Google AI a combined 2,961 times. Responses were normalised into ordered brand lists for analysis.
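The article does not describe how SparkToro normalised responses. As a rough illustration only, the Python sketch below shows one way such a step could work. It assumes a hand-built alias table (`aliases`) that maps spelling variants to a canonical brand name. The function name `normalise_brand_list` and the sample brands are hypothetical placeholders.

```python
def normalise_brand_list(raw_mentions: list[str], aliases: dict[str, str]) -> list[str]:
    """Turn raw brand mentions from one AI response into an ordered, de-duplicated list.

    `aliases` is a hypothetical lookup from lower-cased variants to a canonical name.
    """
    seen: set[str] = set()
    ordered: list[str] = []
    for mention in raw_mentions:
        key = mention.strip().lower()          # ignore case and stray whitespace
        canonical = aliases.get(key, key)      # fall back to the cleaned text itself
        if canonical not in seen:              # keep only the first mention of each brand
            seen.add(canonical)
            ordered.append(canonical)
    return ordered


# Placeholder data for illustration only.
aliases = {"brand a inc.": "brand a", "a-brand": "brand a"}
raw = ["Brand A Inc.", "Brand B", "A-Brand", " brand b "]
print(normalise_brand_list(raw, aliases))  # ['brand a', 'brand b']
```

Keeping the first-mention order matters because the later analysis compares both which brands appear and the order they appear in.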

The results were striking:

- The exact same list of brands appeared in fewer than 1 in 100 runs
- The same list in the same order appeared in fewer than 1 in 1,000 runs
- The number of recommendations varied significantly between responses

Across sectors including chef’s knives, digital marketing consultants, SaaS cloud providers, and cancer care hospitals, list composition and order changed constantly.
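To make figures like "fewer than 1 in 100 runs" concrete, here is a minimal sketch of one possible way to compute two consistency measures from normalised runs of a single prompt: the share of runs that repeat the most common brand set (order ignored) and the share that repeat the most common exact ordered list. The function `list_consistency` and the placeholder brands are hypothetical; this is not SparkToro's published method.

```python
from collections import Counter


def list_consistency(runs: list[list[str]]) -> tuple[float, float]:
    """Return (share of runs matching the most common brand set,
    share of runs matching the most common exact ordered list)."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    set_counts = Counter(frozenset(run) for run in runs)   # order ignored
    order_counts = Counter(tuple(run) for run in runs)     # order preserved
    top_set = set_counts.most_common(1)[0][1]
    top_order = order_counts.most_common(1)[0][1]
    return top_set / n, top_order / n


# Four hypothetical runs of the same prompt.
runs = [
    ["Brand A", "Brand B", "Brand C"],
    ["Brand B", "Brand A", "Brand C"],
    ["Brand A", "Brand C"],
    ["Brand A", "Brand B", "Brand C", "Brand D"],
]
print(list_consistency(runs))  # (0.5, 0.25)
```

Because a set match ignores order, the first number can never be lower than the second, which mirrors the gap between the 1-in-100 and 1-in-1,000 figures.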

That variability is not a system failure. Large language models are probabilistic by design: they generate each response by sampling from probability distributions learned from their training data, so some variation between runs is expected.

However, the implications for marketers are significant.

Ranking Position Is Not A Reliable Indicator

SparkToro’s analysis makes one point particularly clear: tracking ranking position inside AI responses is unstable.

Even in relatively narrow categories with limited options, order fluctuated dramatically from run to run. A brand might appear first in one response, third in another, and absent in the next.
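A rank-tracking report effectively captures one cell of a distribution like the one computed below. This hypothetical helper, `rank_distribution`, counts where a brand lands across repeated runs of one prompt, recording `None` for runs where it is missing. It is a sketch using placeholder data, not a feature of any named tool.

```python
from collections import Counter
from typing import Optional


def rank_distribution(runs: list[list[str]], brand: str) -> Counter:
    """Count how often `brand` lands at each 1-based position; None means it was absent."""
    positions: Counter = Counter()
    for run in runs:
        position: Optional[int] = run.index(brand) + 1 if brand in run else None
        positions[position] += 1
    return positions


runs = [
    ["Brand A", "Brand B", "Brand C"],
    ["Brand B", "Brand C", "Brand A"],
    ["Brand B", "Brand C"],
]
print(rank_distribution(runs, "Brand A"))
```

In this toy example Brand A is first, third and absent once each, so any single run would misstate its position.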

For marketing teams accustomed to search engine rankings, this requires a mindset shift. AI-generated lists are not fixed hierarchies. They are sampled outputs from a broader pool of likely candidates.

If a CEO shares a screenshot showing your brand below a competitor in ChatGPT, that single output does not represent a fixed reality. According to SparkToro’s data, repetition would almost certainly produce a different order.

In many cases, the situation is less concerning than a one-off example suggests.

Visibility Percentage Shows Stronger Signals

Although rankings proved inconsistent, the research uncovered a more promising metric: visibility percentage.

When prompts were repeated dozens of times, certain brands appeared far more frequently than others. For example, in one tested category, a leading entity appeared in 85 out of 95 responses from Google AI, roughly 89 percent. In another case, a West Coast cancer hospital appeared in 69 of 71 ChatGPT responses, a visibility rate of about 97 percent, despite not consistently ranking first.

Gumshoe’s platform analysed these repeated runs and visualised how often brands appeared across AI responses. The data suggests that frequency of inclusion is far more stable than position.

Visibility percentage reflects whether a brand is consistently part of the AI’s consideration set for a given intent. That offers marketers a more statistically grounded way to assess presence.
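Visibility percentage is simple arithmetic: the number of runs that mention a brand, divided by the total number of runs. The sketch below assumes runs have already been normalised into brand lists; `visibility_percentage` is a hypothetical helper, and the placeholder lists merely reproduce the 69-of-71 ratio reported in the study.

```python
def visibility_percentage(runs: list[list[str]], brand: str) -> float:
    """Percentage of runs in which `brand` appears at any position."""
    if not runs:
        raise ValueError("need at least one run")
    hits = sum(1 for run in runs if brand in run)
    return 100 * hits / len(runs)


# Placeholder lists that reproduce the ratio reported in the study:
# 69 runs that mention the hospital (not in first place) and 2 that do not.
runs = [["Other hospital", "West Coast hospital"]] * 69 + [["Other hospital"]] * 2
print(round(visibility_percentage(runs, "West Coast hospital"), 1))  # 97.2
print(round(100 * 85 / 95, 1))  # 89.5, the Google AI example
```

Position plays no part in the calculation, which is why the metric stays stable even when order does not.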

Prompt Diversity Is A Major Variable

Another key finding from the SparkToro and Gumshoe collaboration relates to how people write prompts.

When 142 participants were asked to create prompts about selecting headphones, semantic similarity across prompts was extremely low. Users expressed the same intent in highly varied ways.

Despite that variation, when those prompts were run multiple times, a relatively consistent group of leading headphone brands appeared between 55 and 77 percent of the time.

That result is important. It indicates that even though prompts differ significantly in wording, AI systems often capture the underlying intent and draw from a similar pool of associated brands.

However, it also highlights a challenge for tracking tools. Measuring AI visibility using a small, fixed set of prompts will not reflect real-world behaviour. Broad and diverse prompt sets are essential for meaningful analysis.
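One way to reflect prompt diversity is to compute visibility separately for each differently worded prompt and then combine the results. The sketch below assumes results are stored as a mapping from prompt text to its repeated runs; `visibility_by_prompt`, `overall_visibility` and the sample prompts and brands are hypothetical.

```python
from statistics import mean


def visibility_by_prompt(results: dict[str, list[list[str]]], brand: str) -> dict[str, float]:
    """Share of runs (0 to 1) containing `brand`, computed separately for each prompt."""
    return {
        prompt: sum(brand in run for run in runs) / len(runs)
        for prompt, runs in results.items()
        if runs  # skip prompts with no recorded runs
    }


def overall_visibility(results: dict[str, list[list[str]]], brand: str) -> float:
    """Average the per-prompt shares so every prompt wording carries equal weight."""
    return mean(visibility_by_prompt(results, brand).values())


# Two differently worded prompts with the same intent, each run several times (placeholder data).
results = {
    "best noise-cancelling headphones": [
        ["Brand A", "Brand B"], ["Brand B", "Brand C"], ["Brand A"], ["Brand A", "Brand C"],
    ],
    "headphones for long flights under $300": [["Brand B"], ["Brand A", "Brand B"]],
}
print(visibility_by_prompt(results, "Brand A"))
print(overall_visibility(results, "Brand A"))  # 0.625
```

Averaging per prompt, rather than pooling every run, stops a prompt that happened to be run more often from dominating the figure. Pooling is an equally defensible choice when each prompt is run the same number of times.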

Category Size Shapes Outcomes

SparkToro’s data also revealed that category breadth affects consistency.

In tightly defined markets with limited viable brands, visibility percentages clustered more tightly. In broader sectors with many credible options, recommendation sets were more diverse and visibility percentages spread more widely.

Context therefore matters. A 35 percent visibility rate in a fragmented, competitive market may represent strong performance. The same percentage in a narrow niche, where only a handful of brands compete for each list, may indicate weaker standing.
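One hypothetical way to put category size into numbers is to compare observed visibility with a naive baseline: if each response listed brands uniformly at random from all viable options, every brand would appear in roughly (average list length ÷ number of viable brands) of responses. This baseline is an illustrative assumption, not part of the SparkToro or Gumshoe methodology, and `lift_over_uniform` is a made-up helper name.

```python
def lift_over_uniform(visibility: float, avg_list_length: float, viable_brands: int) -> float:
    """Observed visibility divided by a naive uniform baseline.

    Hypothetical baseline: each response lists `avg_list_length` brands chosen
    uniformly at random from `viable_brands`, so every brand would appear in
    avg_list_length / viable_brands of responses (capped at 1).
    """
    if viable_brands <= 0 or avg_list_length <= 0:
        raise ValueError("list length and brand count must be positive")
    baseline = min(1.0, avg_list_length / viable_brands)
    return visibility / baseline


# The same 35 percent visibility in two hypothetical categories.
print(round(lift_over_uniform(0.35, avg_list_length=7, viable_brands=40), 2))  # 2.0, twice the baseline
print(round(lift_over_uniform(0.35, avg_list_length=4, viable_brands=5), 2))   # 0.44, well below it
```

Real recommendation lists are far from uniform, so a ratio like this is only a rough sense check on whether a raw percentage is high or low for its category.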

Marketers should always interpret AI visibility metrics within the structure of their category.

Practical Takeaways For Marketing Teams

The combined SparkToro and Gumshoe findings point to several clear actions:

- Stop relying on single screenshots as evidence of performance
- Avoid using ranking position as a primary KPI
- Measure visibility across diverse prompts, repeated multiple times
- Ask vendors for methodological transparency, including sample size and statistical treatment
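Sample size matters because a visibility percentage based on a handful of runs is very uncertain. One common statistical treatment for a proportion like this is the Wilson score interval. The sketch below illustrates the point; it is not a method SparkToro or Gumshoe describe. `wilson_interval` is a hypothetical helper, and z = 1.96 assumes a roughly 95 percent confidence level.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a visibility proportion (z=1.96 gives roughly 95% coverage)."""
    if runs <= 0 or not 0 <= hits <= runs:
        raise ValueError("need runs > 0 and 0 <= hits <= runs")
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2))
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Two similar-looking visibility rates backed by very different sample sizes.
for hits, runs in [(3, 3), (69, 71)]:
    low, high = wilson_interval(hits, runs)
    print(f"{hits}/{runs}: {low:.0%} to {high:.0%}")
```

A brand seen in 3 of 3 runs could plausibly have a true visibility rate as low as about 44 percent, whereas 69 of 71 narrows the range to roughly 90 to 99 percent. That difference is exactly what a vendor's stated sample size should reveal.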

If leadership is questioning why your brand appears below a competitor in a single AI response, the data provides helpful context. AI outputs vary by design. A broader sample may show strong overall inclusion, even if position fluctuates.

Shifting the conversation from “Where do we rank?” to “How frequently do we appear?” leads to a more informed and constructive discussion.

A More Mature Approach To AI Measurement

SparkToro’s research does not suggest that AI visibility tracking is futile. On the contrary, it indicates that, when measured properly, visibility percentage can offer useful directional insight.

What it does challenge is simplistic ranking-based reporting.

Generative AI is reshaping discovery. Marketing measurement frameworks must adapt to probabilistic systems rather than deterministic lists. Brands that embrace statistical rigour, demand transparency, and educate stakeholders on how AI outputs work will be better positioned to navigate this evolving landscape.

The opportunity is real. The measurement approach simply needs to match the complexity of the technology.
